Who This Article Is For — And a Warning

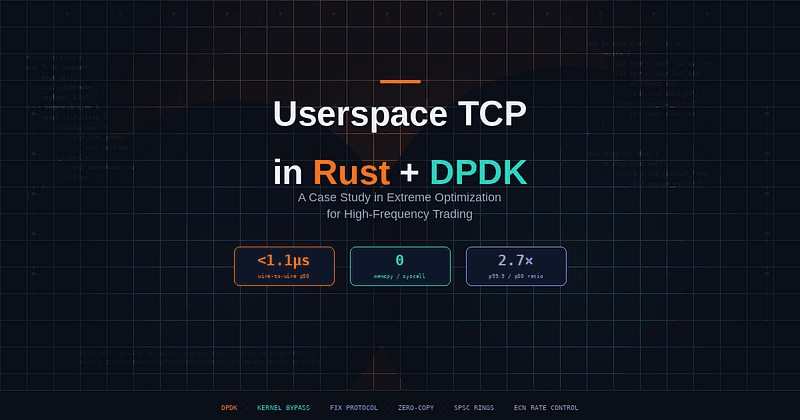

This article walks through the design and implementation of a custom, minimal TCP handler in Rust using DPDK kernel bypass, built for an equities market-making platform. The code is real, the design decisions are reasoned, and the performance numbers are measured.

However: this is a case study in extremes, not a tutorial. Building a custom TCP stack is almost certainly not how you should approach low-latency networking, even in HFT. Before you read further, here’s a decision tree:

Use the standard kernel stack (with tuning) if your latency budget is above 5µs. A properly configured kernel with SO_BUSY_POLL, TCP_NODELAY, IRQ affinity, isolcpus, and nohz_full achieves 5–8µs RTT in colocation environments. That's sufficient for the vast majority of quantitative trading strategies.

Use an existing kernel-bypass TCP library — Solarflare OpenOnload, Mellanox VMA, or a DPDK-based TCP stack like F-Stack — if you need 1–3µs and want to preserve the BSD socket API. These have been battle-tested for over a decade, handle the edge cases we’re about to deliberately ignore, and cost you zero engineering headcount to maintain. This is what most HFT firms actually use.

Consider a custom userspace stack only if all of these are true:

- You have 3+ full-time engineers dedicated to network stack development and maintenance.

- You’ve already deployed OpenOnload or equivalent and profiled its overhead.

- Your edge is specifically in wire-to-wire latency (not strategy computation, not colocation hardware).

- You’re willing to accept occasional protocol-induced disconnections that a full TCP stack would handle.

- Compliance or vendor policy prevents you from using third-party networking code.

Consider FPGA-based networking if you need sub-100ns latency. At that point, software — even kernel-bypass software — is the bottleneck, and this entire article is irrelevant to you.

With that framing established: here’s how we built ours, why we made the choices we did, and where those choices hurt us.

The Latency Landscape: Honest Numbers

The common narrative in kernel-bypass advocacy compares a hand-tuned userspace stack against a default, untuned Linux kernel and claims 30–50× improvement. That’s misleading. Here’s what we actually measured on identical hardware (Mellanox ConnectX-5, Intel Xeon Gold 6254, direct cross-connect to exchange gateway) across three configurations:

Configuration                             Median RTT   p99 RTT   p99.9 RTT
──────────────────────────────────────────────────────────────────────────
Default kernel (Ubuntu 22.04, epoll)      28 µs        85 µs     210 µs
Tuned kernel (busy-poll, isolcpus,
  IRQ pinning, TCP_NODELAY)               6.2 µs       11 µs     24 µs
Custom DPDK userspace stack               1.1 µs       1.8 µs    2.9 µs
──────────────────────────────────────────────────────────────────────────

The real improvement over a tuned kernel is roughly 5–6× on median and 6–8× on tail latency. Not 30×. That 5× matters enormously when your strategy’s alpha decays on a microsecond timescale — but it’s a fundamentally different argument than “the kernel is unusable.” The kernel is fine. We needed better than fine.

The tail latency improvement is arguably more important than the median. The tuned kernel's p99.9 of 24µs means that one in a thousand packets hits a scheduling hiccup, a TLB miss on a non-hugepage buffer, or an interrupt that slipped through isolcpus. In a system processing 500,000 market data ticks per second, that's 500 ticks per second arriving late. With the DPDK stack, the p99.9 is 2.9µs. The distribution is tight because the polling path has no interrupts, context switches, or syscalls left to introduce jitter.
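The tail arithmetic above is easy to reproduce. The following is a minimal sketch, not the measurement harness behind the table: the function names and the nearest-rank percentile method are assumptions made for illustration, and percentiles are expressed in per-mille (999 means p99.9) to keep the arithmetic in integers.

```rust
/// Nearest-rank percentile over RTT samples in nanoseconds.
/// `permille` is the percentile in tenths of a percent (500 = median,
/// 999 = p99.9). Returns None for an empty slice or an out-of-range value.
pub fn percentile_ns(samples: &mut [u64], permille: u64) -> Option<u64> {
    if samples.is_empty() || permille == 0 || permille > 1000 {
        return None;
    }
    samples.sort_unstable();
    let n = samples.len() as u64;
    // ceil(permille * n / 1000): the smallest rank covering the requested share.
    let rank = (permille * n + 999) / 1000;
    Some(samples[(rank.max(1) - 1) as usize])
}

/// How many events per second fall beyond the given percentile.
pub fn beyond_percentile_per_second(events_per_second: u64, permille: u64) -> u64 {
    events_per_second * (1000 - permille.min(1000)) / 1000
}
```

With 500,000 ticks per second and the p99.9 cutoff, `beyond_percentile_per_second(500_000, 999)` gives the 500 late ticks per second quoted above.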

Architecture Overview

A kernel-bypass trading system restructures how the application interacts with the network. The NIC is unbound from the kernel driver entirely and handed to a userspace framework that manages it directly via DMA ring buffers mapped into the process’s address space.

  Traditional Stack          Kernel Bypass Stack
  ┌──────────────┐           ┌──────────────┐
  │ Application  │           │ Application  │
  ├──────────────┤           ├──────────────┤
  │ Socket API   │           │ Custom TCP   │
  ├──────────────┤           │ State Machine│
  │ TCP/IP       │           ├──────────────┤
  │ (kernel)     │           │ DPDK / PMD   │
  ├──────────────┤           │ (userspace)  │
  │ NIC Driver   │           ├──────────────┤
  │ (kernel)     │           │ NIC HW       │
  ├──────────────┤           │ (DMA rings)  │
  │ NIC HW       │           └──────────────┘
  └──────────────┘
  ~6-8µs (tuned)             ~1-2µs
  ~28µs (default)

The key components of our Rust-based bypass stack:

- DPDK Poll-Mode Driver (PMD): drives the NIC in userspace via dpdk-sys FFI bindings, polling receive queues in a tight loop with zero interrupt overhead.

- Custom TCP State Machine: a minimal, allocation-free TCP implementation that handles only the connection patterns we need (long-lived FIX sessions), deliberately trading generality for speed.

- Zero-Copy Packet Pipeline: packets are parsed in-place from DPDK mbufs, with the application reading directly from DMA-mapped memory.

- Core-Pinned Event Loop: a single-threaded, busy-polling reactor pinned to an isolated CPU core.
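The shape of such a busy-polling reactor can be sketched without DPDK. Everything named here (`RxQueue`, `run_reactor`, `MockQueue`, the burst size of 32) is hypothetical and exists only for illustration; in a real stack the trait would wrap the poll-mode driver's burst-receive call, and core pinning would be handled separately when the thread is launched.

```rust
use std::collections::VecDeque;

/// Hypothetical abstraction over a receive queue. A real implementation
/// would wrap the poll-mode driver's burst-receive call.
pub trait RxQueue {
    type Packet;
    /// Move up to `max` packets into `out` and return how many were added.
    /// Must never block.
    fn rx_burst(&mut self, out: &mut Vec<Self::Packet>, max: usize) -> usize;
}

/// Busy-poll `queue`, passing each packet to `handle`, until `should_stop`
/// returns true. Returns the number of packets processed.
pub fn run_reactor<Q, H, S>(queue: &mut Q, mut handle: H, mut should_stop: S) -> u64
where
    Q: RxQueue,
    H: FnMut(Q::Packet),
    S: FnMut() -> bool,
{
    const BURST: usize = 32;
    let mut batch = Vec::with_capacity(BURST); // allocated once, then reused
    let mut processed = 0u64;
    while !should_stop() {
        if queue.rx_burst(&mut batch, BURST) == 0 {
            // Nothing arrived: spin rather than sleep, so no wake-up latency.
            std::hint::spin_loop();
            continue;
        }
        for pkt in batch.drain(..) {
            handle(pkt);
            processed += 1;
        }
    }
    processed
}

/// In-memory queue used only to exercise the loop.
pub struct MockQueue(pub VecDeque<u32>);

impl RxQueue for MockQueue {
    type Packet = u32;
    fn rx_burst(&mut self, out: &mut Vec<u32>, max: usize) -> usize {
        let n = max.min(self.0.len());
        out.extend(self.0.drain(..n));
        n
    }
}
```

The loop never yields to the scheduler, which is why the core it runs on has to be isolated: a busy-polling thread on a shared core would starve everything else scheduled there.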

Hardware and OS Prerequisites

Before writing a single line of Rust, the host must be configured for deterministic operation. These steps apply equally to kernel-bypass and tuned-kernel setups — if you haven’t done these, start here before considering DPDK.

BIOS/Firmware Configuration

# Critical BIOS settings for latency-sensitive workloads:

- Disable Hyper-Threading (SMT) # Eliminates L1 cache contention

- Disable C-States (all except C0) # Prevents core sleep/wake latency

- Disable P-States / SpeedStep # Locks CPU frequency

- Disable NUMA interleaving # Ensures local memory access

- Enable VT-d / IOMMU # Required for DPDK VFIO

- Set power profile to "Maximum Perf" # Prevents firmware throttling

Linux Kernel Tuning

# Isolate CPU cores 2-5 from the scheduler

# Core 2: NIC RX poll loop

# Core 3: TCP processing + strategy

# Core 4: Order transmission

# Core 5: Logging / telemetry (non-critical path)

GRUB_CMDLINE_LINUX="isolcpus=2-5 nohz_full=2-5 rcu_nocbs=2-5 \
    intel_pstate=disable processor.max_cstate=0 idle=poll \
    default_hugepagesz=1G hugepagesz=1G hugepages=4 iommu=pt intel_iommu=on \
    nosmt transparent_hugepage=never"

# After boot: verify isolation

cat /sys/devices/system/cpu/isolated

# Expected: 2-5

# Disable irqbalance and pin NIC interrupts away from trading cores

systemctl stop irqbalance

for irq in $(grep eth0 /proc/interrupts | awk '{print $1}' | tr -d ':'); do
    echo 1 > /proc/irq/$irq/smp_affinity # Pin to core 0
done
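The isolation check can also be done from the application at startup, so a misconfigured host fails fast instead of trading with jitter. This is a minimal sketch: `parse_cpu_list` and `cores_isolated` are hypothetical helper names, and the input is assumed to be the contents of /sys/devices/system/cpu/isolated in the kernel's usual list format ("2-5", "0,2-3,8").

```rust
/// Parse a Linux CPU list such as "2-5" or "0,2-3,8" into sorted core ids.
/// Returns None on malformed input. An empty string yields an empty list.
pub fn parse_cpu_list(s: &str) -> Option<Vec<usize>> {
    let mut cpus: Vec<usize> = Vec::new();
    for part in s.trim().split(',').filter(|p| !p.is_empty()) {
        match part.split_once('-') {
            Some((lo, hi)) => {
                let lo: usize = lo.trim().parse().ok()?;
                let hi: usize = hi.trim().parse().ok()?;
                if lo > hi {
                    return None;
                }
                cpus.extend(lo..=hi);
            }
            None => cpus.push(part.trim().parse().ok()?),
        }
    }
    cpus.sort_unstable();
    cpus.dedup();
    Some(cpus)
}

/// True if every core in `required` appears in the isolated list.
pub fn cores_isolated(isolated: &str, required: &[usize]) -> bool {
    match parse_cpu_list(isolated) {
        Some(iso) => required.iter().all(|c| iso.binary_search(c).is_ok()),
        None => false,
    }
}
```

At startup the string would come from `std::fs::read_to_string("/sys/devices/system/cpu/isolated")`, and the process would refuse to start if `cores_isolated(&contents, &[2, 3, 4, 5])` is false.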

Hugepage Configuration

DPDK requires hugepages for its memory pools. 1GB pages minimize TLB misses on packet buffer access, because a handful of TLB entries cover the entire pool:

# Verify hugepages

grep -i huge /proc/meminfo

# HugePages_Total: 4

# HugePages_Free: 4

# Hugepagesize: 1048576 kB

mount -t hugetlbfs nodev /dev/hugepages -o pagesize=1G
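The same verification can be automated. The sketch below parses the hugepage counters out of /proc/meminfo text; `HugepageInfo`, `parse_hugepages`, and `has_free_1g_pages` are hypothetical names. Note that these /proc/meminfo fields describe the default hugepage size only, so the check assumes 1GB is the default size on the host.

```rust
/// Hugepage counters as reported by /proc/meminfo (default hugepage size only).
#[derive(Debug, PartialEq, Eq)]
pub struct HugepageInfo {
    pub total: u64,
    pub free: u64,
    pub page_size_kb: u64,
}

/// Extract HugePages_Total, HugePages_Free and Hugepagesize from meminfo text.
/// Returns None if any of the three fields is missing or not a number.
pub fn parse_hugepages(meminfo: &str) -> Option<HugepageInfo> {
    let field = |name: &str| -> Option<u64> {
        meminfo.lines().find_map(|line| {
            let (key, rest) = line.split_once(':')?;
            if key.trim() != name {
                return None;
            }
            // The value is the first token after the colon; a "kB" unit may follow.
            rest.split_whitespace().next()?.parse().ok()
        })
    };
    Some(HugepageInfo {
        total: field("HugePages_Total")?,
        free: field("HugePages_Free")?,
        page_size_kb: field("Hugepagesize")?,
    })
}

/// True if at least `needed` free 1 GiB pages are available.
pub fn has_free_1g_pages(info: &HugepageInfo, needed: u64) -> bool {
    info.page_size_kb == 1_048_576 && info.free >= needed
}
```

Running this before initializing DPDK turns a confusing allocation failure into an explicit error about the host configuration.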

NIC Binding

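# Unbind NIC from kernel driver, bind to VFIO for DPDK.
# This applies to PMDs that take over the whole device (e.g. Intel ixgbe/i40e/ice).
# Mellanox/NVIDIA ConnectX cards use the bifurcated mlx5 PMD and stay bound to
# the mlx5_core kernel driver, so this step is skipped for them.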

dpdk-devbind.py --bind=vfio-pci 0000:03:00.0

Project Structure and DPDK Bindings

We use raw FFI bindings to DPDK rather than a higher-level wrapper, giving full control over memory management and packet processing semantics.

hft-tcp/

├── Cargo.toml

├── build.rs # DPDK linkage configuration

├── src/

│ ├── main.rs # Entry point, EAL init, core launch

│ ├── dpdk/

│ │ ├── mod.rs

│ │ ├── ffi.rs # Raw DPDK FFI bindings

│ │ ├── mbuf.rs # Zero-copy mbuf wrapper

│ │ └── port.rs # NIC port configuration

│ ├── net/

│ │ ├── mod.rs

│ │ ├── ethernet.rs # Ethernet frame parsing

│ │ ├── ip.rs # IPv4 header parsing

│ │ ├── tcp.rs # TCP state machine

│ │ ├── arp.rs # ARP responder

│ │ └── checksum.rs # Hardware-offloaded checksums

│ ├── protocol/

│ │ ├── mod.rs

│ │ └── fix.rs # FIX protocol parser

│ ├── engine/

│ │ ├── mod.rs

│ │ └── event_loop.rs # Core poll loop

│ └── mem/

│ ├── mod.rs

│ ├── pool.rs # Object pool allocator

│ └── ring.rs # Lock-free SPSC ring buffer

Build Configuration

# Cargo.toml

[package]

name = "hft-tcp"

version = "0.1.0"

edition = "2021"

[dependencies]

libc = "0.2"

[build-dependencies]

pkg-config = "0.3"

bindgen = "0.69"

[profile.release]

opt-level = 3

lto = "fat"

codegen-units = 1

panic = "abort" # No unwinding overhead on the hot path

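# Note: target-cpu is a rustc codegen flag, not a Cargo profile key.
# Set it in .cargo/config.toml (or via RUSTFLAGS) instead:
#   [build]
#   rustflags = ["-C", "target-cpu=native"]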

// build.rs

fn main() {

let dpdk_libs = [

"rte_eal", "rte_mempool", "rte_mbuf", "rte_ring",

"rte_ethdev", "rte_net", "rte_hash", "rte_timer",

];

for lib in &dpdk_libs {

println!("cargo:rustc-link-lib={lib}");

}

let dpdk = pkg_config::Config::new()

.probe("libdpdk")

.expect("DPDK not found via pkg-config");

for path in &dpdk.include_paths {

println!("cargo:include={}", path.display());

}

println!("cargo:rustc-link-lib=numa");

println!("cargo:rustc-link-lib=pthread");

}

Zero-Copy Mbuf Abstraction

DPDK’s rte_mbuf is the fundamental packet buffer — a metadata header pointing into DMA-mapped hugepage memory where the NIC deposits raw bytes. Our Rust wrapper provides safe, zero-copy access without allocation on the critical path.

A note on the safety claim here: the Drop implementation guarantees that every mbuf is returned to its mempool on every code path. This isn't the borrow checker verifying DPDK's invariants — it can't do that across FFI. What it does is prevent the specific class of bug where a C programmer adds an early return and forgets the goto out_free. That's a narrow but real advantage. The unsafe at the boundary is still manual and still your responsibility. Rust doesn't make DPDK safe; it makes the wrapper harder to misuse.

// src/dpdk/mbuf.rs

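use std::ptr::NonNull;
use std::slice;

// Raw bindings live in src/dpdk/ffi.rs (see the project layout above).
use super::ffi as dpdk_ffi;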

/// Zero-copy wrapper around DPDK's rte_mbuf.

///

/// This type does NOT implement Clone - mbufs are unique resources

/// that must be explicitly freed back to their mempool.

pub struct Mbuf {

raw: NonNull<dpdk_ffi::rte_mbuf>,

}

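// SAFETY: An Mbuf uniquely owns its rte_mbuf (no Clone, no aliasing), so moving
// it to another thread transfers exclusive access to the buffer. The type stays
// !Sync because NonNull is !Sync, so it can never be shared by reference.
// Assumption: the backing mempool accepts a free from the receiving lcore,
// which holds for DPDK's default multi-producer/multi-consumer pools.
unsafe impl Send for Mbuf {}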

impl Mbuf {

/// Wrap a raw mbuf pointer received from rte_eth_rx_burst.

///

/// # Safety

/// The pointer must be a valid, non-null mbuf from a DPDK mempool.

/// Caller must ensure the mbuf is not accessed through any other pointer

/// for the lifetime of this wrapper.

#[inline(always)]

pub unsafe fn from_raw(ptr: *mut dpdk_ffi::rte_mbuf) -> Self {

Self {

raw: NonNull::new_unchecked(ptr),

}

}

/// Returns a slice over the packet data, starting from the

/// current data offset. Points directly into DMA memory -

/// no copies performed.

#[inline(always)]

pub fn data(&self) -> &[u8] {

unsafe {

let mbuf = self.raw.as_ref();

let data_ptr = (mbuf.buf_addr as *const u8).add(mbuf.data_off as usize);

slice::from_raw_parts(data_ptr, mbuf.data_len as usize)

}

}

/// Mutable slice over packet data for in-place modification

/// (e.g., swapping MAC addresses for response packets).

#[inline(always)]

pub fn data_mut(&mut self) -> &mut [u8] {

unsafe {

let mbuf = self.raw.as_mut();

let data_ptr = (mbuf.buf_addr as *mut u8).add(mbuf.data_off as usize);

slice::from_raw_parts_mut(data_ptr, mbuf.data_len as usize)

}

}

#[inline(always)]

pub fn pkt_len(&self) -> u32 {

unsafe { self.raw.as_ref().pkt_len }

}

/// Prepend space to the packet (move data_off backward).

#[inline(always)]

pub fn prepend(&mut self, len: u16) -> Option<&mut [u8]> {

unsafe {

let mbuf = self.raw.as_mut();

if mbuf.data_off < len {

return None;

}

mbuf.data_off -= len;

mbuf.data_len += len;

mbuf.pkt_len += len as u32;

Some(slice::from_raw_parts_mut(

(mbuf.buf_addr as *mut u8).add(mbuf.data_off as usize),

len as usize,

))

}

}

/// Consume the wrapper and return the raw pointer.

/// Used when passing mbufs to rte_eth_tx_burst.

#[inline(always)]

pub fn into_raw(self) -> *mut dpdk_ffi::rte_mbuf {

let ptr = self.raw.as_ptr();

std::mem::forget(self); // Don't run Drop

ptr

}

}

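impl Drop for Mbuf {
    fn drop(&mut self) {
        // rte_pktmbuf_free is a static inline function in DPDK's headers, so
        // dpdk_ffi must export it through a small C shim for this call to link.
        unsafe {
            dpdk_ffi::rte_pktmbuf_free(self.raw.as_ptr());
        }
    }
}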
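The early-return guarantee described above can be demonstrated without DPDK. In this sketch `MockPool` and `MockBuf` are hypothetical stand-ins for a mempool and for `Mbuf`; the pool only counts outstanding buffers, and the `Vec` inside the buffer is there for illustration (the real wrapper never allocates).

```rust
use std::cell::Cell;
use std::rc::Rc;

/// Stand-in for a mempool: it only counts buffers currently checked out.
#[derive(Default)]
pub struct MockPool {
    outstanding: Cell<usize>,
}

impl MockPool {
    pub fn outstanding(&self) -> usize {
        self.outstanding.get()
    }
}

/// Stand-in for `Mbuf`: gives itself back to the pool when dropped.
pub struct MockBuf {
    pool: Rc<MockPool>,
    pub data: Vec<u8>,
}

impl MockBuf {
    pub fn alloc(pool: &Rc<MockPool>, data: &[u8]) -> Self {
        pool.outstanding.set(pool.outstanding.get() + 1);
        Self { pool: Rc::clone(pool), data: data.to_vec() }
    }
}

impl Drop for MockBuf {
    fn drop(&mut self) {
        self.pool.outstanding.set(self.pool.outstanding.get() - 1);
    }
}

/// A handler with several exits. None of them frees the buffer explicitly:
/// `buf` is dropped, and therefore returned to the pool, on every path.
pub fn handle(buf: MockBuf) -> Result<usize, &'static str> {
    if buf.data.len() < 14 {
        return Err("runt frame");
    }
    if buf.data[12..14] != [0x08, 0x00] {
        return Err("not IPv4");
    }
    Ok(buf.data.len())
}
```

Adding a fourth exit to `handle` cannot leak a buffer, which is exactly the class of bug the `goto out_free` convention exists to prevent in C.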

Zero-Copy Packet Parsing

With raw bytes accessible through the mbuf, we parse Ethernet, IP, and TCP headers by casting byte slices into header references in place. No allocation, no copying — each parse compiles to a pointer cast and a bounds check (~2–5 nanoseconds for all three layers).

// src/net/ethernet.rs

pub const ETH_HEADER_LEN: usize = 14;

pub const ETHERTYPE_IPV4: u16 = 0x0800;

pub const ETHERTYPE_ARP: u16 = 0x0806;

#[repr(C, packed)]

#[derive(Clone, Copy)]

pub struct EthHeader {

pub dst_mac: [u8; 6],

pub src_mac: [u8; 6],

pub ethertype: u16,

}

impl EthHeader {

#[inline(always)]

pub fn parse(data: &[u8]) -> Option<&Self> {

if data.len() < ETH_HEADER_LEN {

return None;

}

// SAFETY: EthHeader is repr(C, packed) and we've verified length.

Some(unsafe { &*(data.as_ptr() as *const Self) })

}

#[inline(always)]

pub fn ethertype(&self) -> u16 {

u16::from_be(self.ethertype)

}

}

// src/net/ip.rs

pub const IP_HEADER_MIN_LEN: usize = 20;

#[repr(C, packed)]

#[derive(Clone, Copy)]

pub struct Ipv4Header {

pub version_ihl: u8,

pub dscp_ecn: u8,

pub total_length: u16,

pub identification: u16,

pub flags_fragment: u16,

pub ttl: u8,

pub protocol: u8,

pub checksum: u16,

pub src_addr: u32,

pub dst_addr: u32,

}

impl Ipv4Header {

#[inline(always)]

pub fn parse(data: &[u8]) -> Option<&Self> {

if data.len() < IP_HEADER_MIN_LEN {

return None;

}

Some(unsafe { &*(data.as_ptr() as *const Self) })

}

#[inline(always)]

pub fn header_len(&self) -> usize {

((self.version_ihl & 0x0F) as usize) * 4

}

#[inline(always)]

pub fn total_length(&self) -> u16 {

u16::from_be(self.total_length)

}

#[inline(always)]

pub fn ecn(&self) -> u8 {

self.dscp_ecn & 0x03

}

#[inline(always)]

pub fn protocol(&self) -> u8 {

self.protocol

}

}

// src/net/tcp.rs (header parsing)

pub const TCP_HEADER_MIN_LEN: usize = 20;

pub const TCP_FIN: u8 = 0x01;

pub const TCP_SYN: u8 = 0x02;

pub const TCP_RST: u8 = 0x04;

pub const TCP_PSH: u8 = 0x08;

pub const TCP_ACK: u8 = 0x10;

pub const TCP_ECE: u8 = 0x40;

pub const TCP_CWR: u8 = 0x80;

#[repr(C, packed)]

#[derive(Clone, Copy)]

pub struct TcpHeader {

pub src_port: u16,

pub dst_port: u16,

pub seq_num: u32,

pub ack_num: u32,

pub data_offset_flags: u16,

pub window: u16,

pub checksum: u16,

pub urgent_ptr: u16,

}

impl TcpHeader {

#[inline(always)]

pub fn parse(data: &[u8]) -> Option<&Self> {

if data.len() < TCP_HEADER_MIN_LEN {

return None;

}

Some(unsafe { &*(data.as_ptr() as *const Self) })

}

#[inline(always)]

pub fn src_port(&self) -> u16 { u16::from_be(self.src_port) }

#[inline(always)]

pub fn dst_port(&self) -> u16 { u16::from_be(self.dst_port) }

#[inline(always)]

pub fn seq_num(&self) -> u32 { u32::from_be(self.seq_num) }

#[inline(always)]

pub fn ack_num(&self) -> u32 { u32::from_be(self.ack_num) }

#[inline(always)]

pub fn data_offset(&self) -> usize {

((u16::from_be(self.data_offset_flags) >> 12) as usize) * 4

}

#[inline(always)]

pub fn flags(&self) -> u8 {

(u16::from_be(self.data_offset_flags) & 0xFF) as u8

}

#[inline(always)]

pub fn has_flag(&self, flag: u8) -> bool {

self.flags() & flag != 0

}

#[inline(always)]

pub fn window(&self) -> u16 { u16::from_be(self.window) }

}
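The three parsers are meant to be chained: each layer's length field decides where the next header starts. The sketch below shows that chaining on raw byte offsets so that it stays self-contained; `tcp_payload` is a hypothetical helper, not part of the stack above. It also validates the variable-length fields (IHL, total length, TCP data offset) against the bytes actually present, which the minimum-length checks in `parse` alone do not cover.

```rust
/// Locate the TCP payload inside an untagged Ethernet/IPv4/TCP frame.
/// Returns a sub-slice of `frame` (no copy), or None if any header is
/// malformed or the frame is not IPv4/TCP.
pub fn tcp_payload(frame: &[u8]) -> Option<&[u8]> {
    const ETH_LEN: usize = 14;
    const MIN_HDR: usize = 20; // minimum size of both the IPv4 and TCP headers

    // Ethernet: EtherType sits at bytes 12..14, in network byte order.
    let ethertype = u16::from_be_bytes([*frame.get(12)?, *frame.get(13)?]);
    if ethertype != 0x0800 {
        return None;
    }

    // IPv4: check version, protocol (6 = TCP), header length and total length.
    let ip = frame.get(ETH_LEN..)?;
    if ip.len() < MIN_HDR || ip[0] >> 4 != 4 || ip[9] != 6 {
        return None;
    }
    let ihl = ((ip[0] & 0x0F) as usize) * 4;
    let total_len = u16::from_be_bytes([ip[2], ip[3]]) as usize;
    if ihl < MIN_HDR || total_len < ihl || total_len > ip.len() {
        return None;
    }

    // TCP: slicing to total_len discards any Ethernet padding after the datagram.
    let tcp = &ip[ihl..total_len];
    if tcp.len() < MIN_HDR {
        return None;
    }
    let data_off = ((tcp[12] >> 4) as usize) * 4;
    if data_off < MIN_HDR || data_off > tcp.len() {
        return None;
    }
    Some(&tcp[data_off..])
}
```

Using the IP total length rather than the frame length matters for short messages: Ethernet pads frames to a 60-byte minimum, and the padding must not be delivered to the FIX parser as payload.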

TCP State Machine: Minimal by Design

A general-purpose TCP implementation handles dozens of edge cases: simultaneous open, urgent data, silly window syndrome, Nagle’s algorithm, slow start, congestion avoidance, and more. Our stack handles exactly the connection patterns that matter: long-lived, pre-established sessions to known exchange gateways, carrying FIX messages over reliable datacenter links.

This section is honest about what we cut, why we cut it, and — crucially — where those cuts have bitten us.

// src/net/tcp.rs (state machine)

#[derive(Debug, Clone, Copy, PartialEq, Eq)]

pub enum TcpState {

Closed,

SynSent,

Established,

FinWait1,

FinWait2,

TimeWait,

}

/// 4-tuple connection identifier. Used as lookup key via perfect hashing.

#[derive(Debug, Clone, Copy, Hash, PartialEq, Eq)]

pub struct ConnKey {

pub local_addr: u32,

pub local_port: u16,

pub remote_addr: u32,

pub remote_port: u16,

}

/// Single TCP connection's state.

///

/// Hot fields are packed into the first cache line (64 bytes).
/// Cold fields (retransmit queue, stats) follow.
/// align(64) makes the struct start on a cache-line boundary; without it
/// the first 64 bytes could straddle two lines.
#[repr(C, align(64))]
pub struct TcpConnection {
    // === Cache line 0: Hot path (64 bytes) ===
    pub key: ConnKey,           // 12 bytes
    pub state: TcpState,        //  1 byte
    _pad0: [u8; 3],             //  3 bytes
    pub snd_nxt: u32,           //  4 bytes - next sequence to send
    pub snd_una: u32,           //  4 bytes - oldest unacknowledged
    pub rcv_nxt: u32,           //  4 bytes - next expected receive seq
    pub rcv_wnd: u16,           //  2 bytes - our advertised window
    pub snd_wnd: u16,           //  2 bytes - peer's advertised window
    pub last_ack_sent: u32,     //  4 bytes (ends at offset 36)
    // repr(C) inserts 4 bytes of implicit padding here to 8-byte-align the u64.
    pub last_activity_tsc: u64, //  8 bytes - TSC of last packet (offset 40)
    pub rtt_estimate_ns: u32,   //  4 bytes - smoothed RTT
    pub ecn_ce_count: u32,      //  4 bytes - ECN congestion signals
    _pad1: [u8; 8],             //  8 bytes to fill cache line (ends at offset 64)

    // === Cache line 1+: Cold path ===
    pub retransmit_queue: RetransmitQueue,
    pub stats: ConnStats,
    pub rate_state: RateState,
}

// Compile-time check that the cold fields start exactly at byte 64
// (offset_of! requires Rust 1.77 or later).
const _: () = assert!(core::mem::offset_of!(TcpConnection, retransmit_queue) == 64);

/// Lightweight rate control based on ECN signals.

/// NOT full congestion control - just enough to back off when

/// the switch signals queue buildup via ECN CE marks.

pub struct RateState {

/// Target inter-packet gap in TSC ticks. Increased on ECN CE,

/// decayed back toward zero when signals clear.

pub send_gap_tsc: u64,

/// Timestamp of last ECN CE received.

pub last_ce_tsc: u64,

/// Number of CE marks in current measurement window.

pub ce_in_window: u32,

/// Total packets in current measurement window.

pub packets_in_window: u32,

}

pub struct RetransmitQueue {

entries: [Option<RetransmitEntry>; 16], // Fixed-size, no heap allocation

head: usize,

len: usize,

}

pub struct RetransmitEntry {

pub seq: u32,

pub data_len: u16,

pub header_template: [u8; 66], // Pre-built ETH+IP+TCP headers

pub sent_tsc: u64,

pub retransmit_count: u8,

}

#[derive(Default)]

pub struct ConnStats {

pub packets_rx: u64,

pub packets_tx: u64,

pub bytes_rx: u64,

pub bytes_tx: u64,

pub retransmits: u64,

pub ecn_ce_marks: u64,

}

impl TcpConnection {

/// Process an inbound TCP segment and return an action.

#[inline(always)]

pub fn on_segment(

&mut self,

ip: &Ipv4Header,

tcp: &TcpHeader,

payload: &[u8],

now_tsc: u64,

) -> SegmentAction {

        self.last_activity_tsc = now_tsc;
        self.stats.packets_rx += 1;
        self.stats.bytes_rx += payload.len() as u64;
        // Denominator for the CE fraction computed in handle_ecn_ce.
        self.rate_state.packets_in_window += 1;

        // Check ECN Congestion Experienced (CE) in IP header
        if ip.ecn() == 0b11 {
            self.handle_ecn_ce(now_tsc);
        }

match self.state {

TcpState::Established => self.handle_established(tcp, payload),

TcpState::SynSent => self.handle_syn_sent(tcp),

TcpState::FinWait1 => self.handle_fin_wait1(tcp),

TcpState::FinWait2 => self.handle_fin_wait2(tcp),

_ => SegmentAction::Drop,

}

}

#[inline(always)]

fn handle_established(

&mut self,

tcp: &TcpHeader,

payload: &[u8],

) -> SegmentAction {

let seg_seq = tcp.seq_num();

let seg_ack = tcp.ack_num();

let flags = tcp.flags();

        // A reset tears the connection down whatever else the segment carries.
        // Checked first: an in-order RST that also has ACK set would otherwise
        // be swallowed by the fast path below. (The RST's sequence number is
        // not validated here.)
        if flags & TCP_RST != 0 {
            self.state = TcpState::Closed;
            return SegmentAction::ConnectionReset;
        }

        // CWR: the peer has reacted to our ECE echo, so we can stop echoing.
        if flags & TCP_CWR != 0 {
            self.ecn_ce_count = 0;
        }

        // Fast path: in-order data with ACK. FIN segments are excluded so that
        // a FIN+ACK reaches the teardown branch instead of returning Consumed.
        if seg_seq == self.rcv_nxt && flags & TCP_ACK != 0 && flags & TCP_FIN == 0 {
            if Self::seq_gt(seg_ack, self.snd_una)
                && Self::seq_leq(seg_ack, self.snd_nxt)
            {
                self.acknowledge_up_to(seg_ack);
            }
            self.snd_wnd = tcp.window();

            if !payload.is_empty() {
                self.rcv_nxt = self.rcv_nxt.wrapping_add(payload.len() as u32);
                // Echo ECE flag if we've seen CE marks
                let echo_ece = self.ecn_ce_count > 0;
                return SegmentAction::Deliver {
                    ack_immediately: flags & TCP_PSH != 0,
                    echo_ece,
                };
            }
            return SegmentAction::Consumed;
        }

        if flags & TCP_FIN != 0 && seg_seq == self.rcv_nxt {
            self.rcv_nxt = self.rcv_nxt.wrapping_add(1);
            self.state = TcpState::TimeWait;
            return SegmentAction::SendAck;
        }

        // Out-of-order - send duplicate ACK
        SegmentAction::SendDuplicateAck

}

fn handle_syn_sent(&mut self, tcp: &TcpHeader) -> SegmentAction {

if tcp.has_flag(TCP_SYN) && tcp.has_flag(TCP_ACK) {

self.rcv_nxt = tcp.seq_num().wrapping_add(1);

self.snd_una = tcp.ack_num();

self.snd_wnd = tcp.window();

self.state = TcpState::Established;

return SegmentAction::SendAck;

}

SegmentAction::Drop

}

fn handle_fin_wait1(&mut self, tcp: &TcpHeader) -> SegmentAction {

if tcp.has_flag(TCP_ACK) {

self.state = TcpState::FinWait2;

}

if tcp.has_flag(TCP_FIN) {

self.rcv_nxt = tcp.seq_num().wrapping_add(1);

self.state = TcpState::TimeWait;

return SegmentAction::SendAck;

}

SegmentAction::Consumed

}

fn handle_fin_wait2(&mut self, tcp: &TcpHeader) -> SegmentAction {

if tcp.has_flag(TCP_FIN) {

self.rcv_nxt = tcp.seq_num().wrapping_add(1);

self.state = TcpState::TimeWait;

return SegmentAction::SendAck;

}

SegmentAction::Consumed

}

/// Handle ECN Congestion Experienced mark from IP header.

///

/// This is our minimal congestion response. When the switch marks

/// a packet with CE, it means queue depth is building. We increase

/// the inter-packet send gap to reduce our rate, similar to DCTCP's

/// approach but much simpler.

///

/// Why not skip this entirely? We tried. PFC storms on the exchange

/// switch caused 200ms pauses when our uncontrolled burst rate

/// triggered priority flow control. A 1µs inter-packet gap during

/// congestion costs us almost nothing in normal operation but

/// prevents cascading switch failures.

fn handle_ecn_ce(&mut self, now_tsc: u64) {

self.ecn_ce_count += 1;

self.stats.ecn_ce_marks += 1;

self.rate_state.ce_in_window += 1;

self.rate_state.last_ce_tsc = now_tsc;

        // Increase send gap: DCTCP-inspired proportional response.
        //   ce_fraction = CE marks / total packets in window
        //   gap         = max_gap * ce_fraction
        if self.rate_state.packets_in_window > 0 {
            let ce_fraction = self.rate_state.ce_in_window as f64
                / self.rate_state.packets_in_window as f64;
            // At 100% CE marking, impose a 2µs inter-packet gap
            // (~6000 TSC ticks, assuming a 3GHz TSC).
            self.rate_state.send_gap_tsc = (6000.0 * ce_fraction) as u64;
        }

}

/// Called periodically to decay the send gap when CE signals clear.

pub fn decay_rate_control(&mut self, now_tsc: u64, tsc_hz: u64) {

// If no CE in the last 1ms, halve the send gap

let one_ms_tsc = tsc_hz / 1000;

if now_tsc.wrapping_sub(self.rate_state.last_ce_tsc) > one_ms_tsc {

self.rate_state.send_gap_tsc /= 2;

self.rate_state.ce_in_window = 0;

self.rate_state.packets_in_window = 0;

}

}

#[inline(always)]

fn acknowledge_up_to(&mut self, ack: u32) {

self.snd_una = ack;

// Retire acknowledged entries from retransmit queue

        while let Some(front) = self.retransmit_queue.peek_front() {
            let end_seq = front.seq.wrapping_add(front.data_len as u32);
            if !Self::seq_leq(end_seq, ack) {
                break;
            }
            self.retransmit_queue.pop_front();
        }

}

#[inline(always)]

fn seq_gt(a: u32, b: u32) -> bool {

(a.wrapping_sub(b) as i32) > 0

}

#[inline(always)]

fn seq_leq(a: u32, b: u32) -> bool {

(a.wrapping_sub(b) as i32) <= 0

}

}

#[derive(Debug)]

pub enum SegmentAction {

Deliver { ack_immediately: bool, echo_ece: bool },

SendAck,

Consumed,

SendDuplicateAck,

ConnectionReset,

Drop,

}
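The listing above calls `peek_front` and `pop_front` on the retransmit queue without showing them. One plausible shape is a fixed-capacity ring, sketched below; `FixedRing`, `push_back`, and `retire_acked` are hypothetical names, and the entry type is reduced to a `(seq, len)` pair to keep the example small. The retirement loop uses the same wraparound-safe comparison as `seq_leq` in the listing.

```rust
/// Fixed-capacity FIFO ring. No heap allocation after construction.
pub struct FixedRing<T, const N: usize> {
    entries: [Option<T>; N],
    head: usize, // index of the oldest entry
    len: usize,
}

impl<T, const N: usize> FixedRing<T, N> {
    pub fn new() -> Self {
        Self { entries: std::array::from_fn(|_| None), head: 0, len: 0 }
    }

    /// Append at the tail. Hands the entry back if the ring is full.
    pub fn push_back(&mut self, entry: T) -> Result<(), T> {
        if self.len == N {
            return Err(entry);
        }
        self.entries[(self.head + self.len) % N] = Some(entry);
        self.len += 1;
        Ok(())
    }

    pub fn peek_front(&self) -> Option<&T> {
        if self.len == 0 { None } else { self.entries[self.head].as_ref() }
    }

    pub fn pop_front(&mut self) -> Option<T> {
        if self.len == 0 {
            return None;
        }
        let entry = self.entries[self.head].take();
        self.head = (self.head + 1) % N;
        self.len -= 1;
        entry
    }

    pub fn len(&self) -> usize {
        self.len
    }
}

/// Wraparound-safe "a <= b" on 32-bit sequence numbers.
pub fn seq_leq(a: u32, b: u32) -> bool {
    (a.wrapping_sub(b) as i32) <= 0
}

/// Retire every (seq, len) entry whose last byte is covered by `ack`.
/// Returns how many entries were removed.
pub fn retire_acked<const N: usize>(q: &mut FixedRing<(u32, u16), N>, ack: u32) -> usize {
    let mut retired = 0;
    while let Some(&(seq, len)) = q.peek_front() {
        if !seq_leq(seq.wrapping_add(len as u32), ack) {
            break;
        }
        q.pop_front();
        retired += 1;
    }
    retired
}
```

A full ring is a design decision point: with 16 slots, the sender has to either stall or drop the connection once 16 segments are unacknowledged, so `push_back` returns the entry to force the caller to choose.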

What We Deliberately Omit — And What Bit Us

| Feature | Why omitted | Risk | Our experience |
|---|---|---|---|
| Nagle's algorithm | Every message is latency-critical; never coalesce | None in practice | No issues |
| Delayed ACKs | We ACK immediately on PSH, batch otherwise | Minor bandwidth overhead | No issues |
| Slow start | Dedicated link, no shared path | Microburst at switch ingress | See congestion section |
| Full congestion control | Too much latency overhead | Packet loss → retransmit-only recovery | PFC storms; added ECN response |
| Out-of-order reassembly | Direct cable, reordering shouldn't happen | Silent data corruption if assumptions wrong | One incident in 18 months |
| Silly window avoidance | FIX messages are request/response | None | No issues |
| TIME_WAIT recycling | Persistent connections | Reconnection delay | Acceptable |

The congestion control omission was our most expensive mistake. In month four of production, a firmware bug on the exchange’s ingress switch caused it to assert Priority Flow Control (PFC) pause frames when our burst rate exceeded its shallow buffer during a volatility spike. The PFC pause propagated back to our NIC, which dutifully paused all transmission for 200ms — an eternity. We lost significant P&L on the positions we couldn’t hedge during the pause.

The fix was the lightweight ECN-based rate control shown above. It adds roughly 3 nanoseconds of overhead per packet (one branch on ip.ecn(), one counter increment) and has prevented recurrence. The lesson: "no shared infrastructure to congest" is a fiction. Even a dedicated cross-connect passes through switch silicon with finite buffer depth.
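To make the numbers concrete, here is the gap arithmetic as a standalone sketch. It uses integer math instead of the `f64` in the listing, and `send_gap_tsc`, `may_send`, and `halvings_to_zero` are hypothetical helper names. The 2µs maximum gap and 3GHz TSC are the figures from the listing's comments.

```rust
/// Inter-packet gap in TSC ticks, proportional to the share of CE-marked packets.
/// `max_gap_ns` is the gap imposed at 100% marking; `tsc_hz` is the TSC frequency.
pub fn send_gap_tsc(ce_marks: u32, packets: u32, max_gap_ns: u64, tsc_hz: u64) -> u64 {
    if packets == 0 {
        return 0;
    }
    let marks = ce_marks.min(packets) as u128;
    // u128 intermediates avoid overflow in ns * Hz.
    let max_gap_tsc = max_gap_ns as u128 * tsc_hz as u128 / 1_000_000_000;
    (max_gap_tsc * marks / packets as u128) as u64
}

/// True once at least `gap_tsc` ticks have passed since the last transmit.
pub fn may_send(now_tsc: u64, last_tx_tsc: u64, gap_tsc: u64) -> bool {
    now_tsc.wrapping_sub(last_tx_tsc) >= gap_tsc
}

/// How many halvings it takes for a gap to decay to zero.
pub fn halvings_to_zero(mut gap_tsc: u64) -> u32 {
    let mut n = 0;
    while gap_tsc > 0 {
        gap_tsc /= 2;
        n += 1;
    }
    n
}
```

At 100% marking the gap is 6000 ticks; at 25% it is 1500 ticks, or 0.5µs. Once marks stop, the full 6000-tick gap takes 13 halvings to disappear, so recovery time is set by how often the decay function is called.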