Software development is a process that not only demands proficiency in various coding languages but also an understanding of specific theoretical principles that can drastically affect a program’s performance.

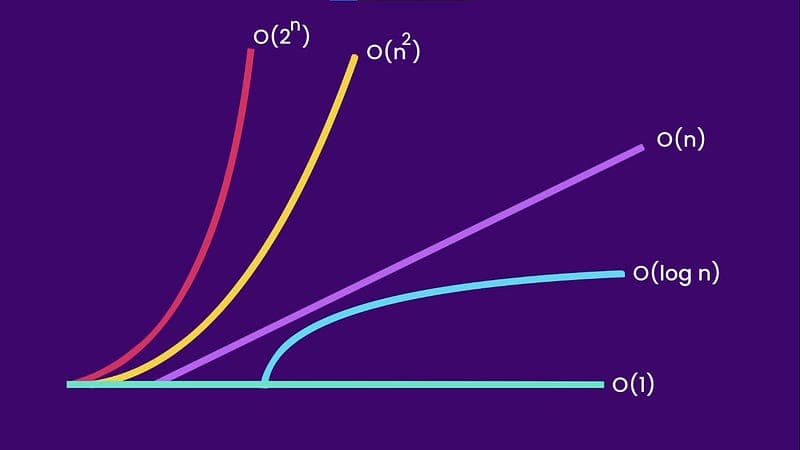

One of the most critical concepts in this realm is the Big O notation, a mathematical notation that describes the limiting behavior of a function, particularly when the argument tends towards a specific value or infinity. This notation is an instrumental tool used in computer science to describe an algorithm’s performance or complexity.

Understanding the Big O Notation

The Big O notation provides a high-level understanding of an algorithm’s time complexity, which is the computational complexity that describes the amount of computer time taken by an algorithm to run, as a function of the size of the input to the program.

It essentially answers the question: how does the run-time grow as the size of the input grows? This question is vital because it allows software developers to assess the efficiency of algorithms and identify potential bottlenecks or areas for improvement.

It’s crucial to note that Big O notation doesn’t measure the speed of an algorithm. Instead, it measures how quickly the run-time grows, based on the input size.

The Different Levels of Big O Notation

There are different levels of Big O notation that each represent different levels of complexity:

- O(1): Also known as constant time complexity. No matter the size of the input, the time to complete the task remains the same. A typical example is accessing an element from an array using an index.

- O(n): This is linear time complexity. The time to complete the task grows linearly with the size of the input. An example is looping through an array to find a specific value.

- O(n²): This is quadratic time complexity. The time to complete the task is proportional to the square of the input size. Common examples include nested loops or bubble sort algorithm.

- O(log n): Also known as logarithmic time complexity. The time to complete the task increases logarithmically with the input size. This is seen in algorithms like binary search.

- O(n log n): This is linearithmic time complexity, where the time to complete the task increases linearly and logarithmically for small and large inputs respectively. Efficient sorting algorithms like mergesort or quicksort exhibit this kind of time complexity.

Examples of Big O Notation

Let’s look at some examples in Python:

O(1) — Constant Time Complexity

def get_first_item(items):

return items[0]

print(get_first_item([1, 2, 3, 4, 5]))

In this example, no matter the size of the list, we always use the same amount of time to retrieve the first item.

O(n) — Linear Time Complexity

def find_item(items, element):

for item in items:

if item == element:

return True

return False

print(find_item([1, 2, 3, 4, 5], 3))

Here, the time to find an item grows linearly with the size of the list. In the worst case scenario, we would have to look at every item in the list.

O(n²) — Quadratic Time Complexity